Mark E. Whiting

I’m Chief Scientist at Pareto and a research fellow at the CSSLab at the University of Pennsylvania. I believe that learning to be better together is the single most important thing we can do to facilitate our long-term success, both as individuals and as a species.

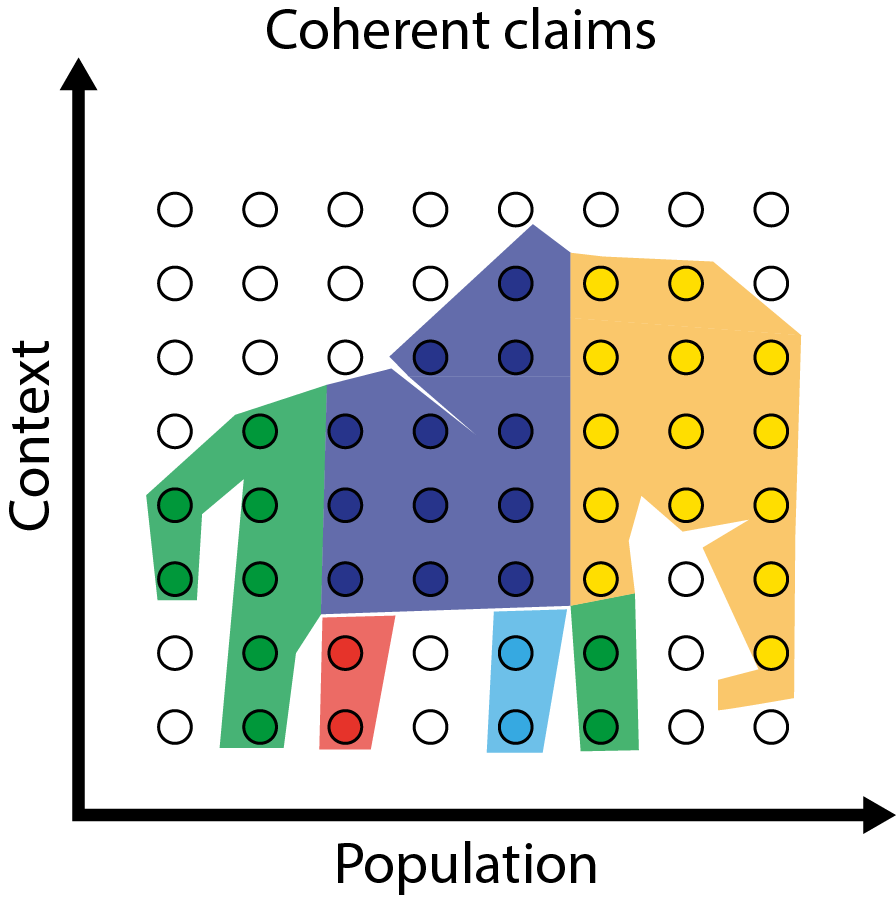

Quantifying common sense

Integrative experiments

COVID dashboards

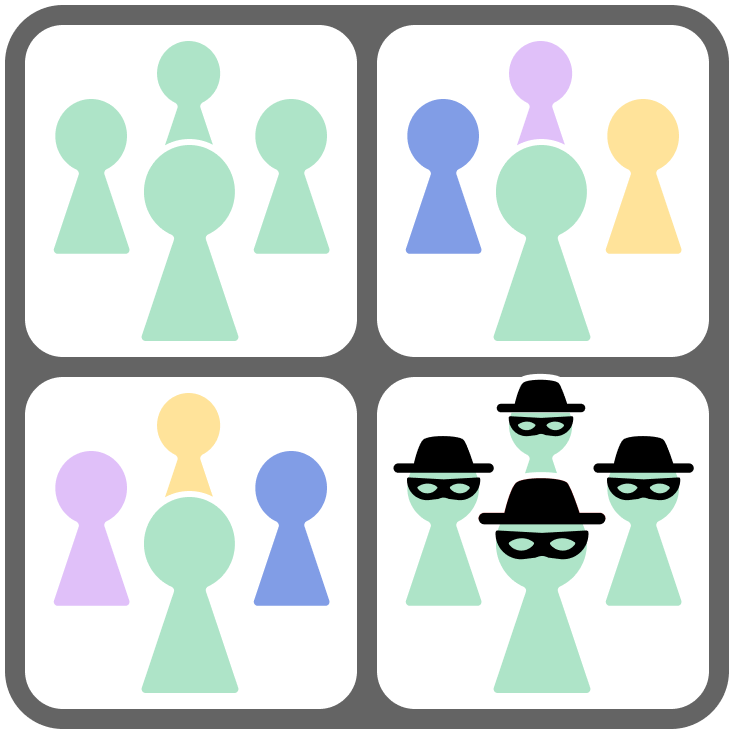

Team fracture
